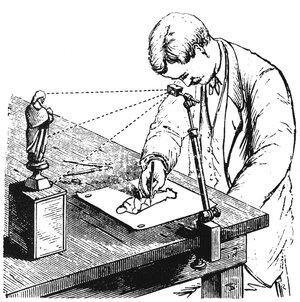

"الكاميرا lucida" هي جهاز يستخدم منشورا يحمله حديد التسليح المعدني لعرض صورة للمشهد أمامه على قطعة من الورق تحتها ، مثل جهاز عرض حديث متصل ببث مباشر للكاميرا.

It was probably invented in the early 14th century, although published accounts of it didn't appear until the late 17th century. Part of the reason is that these devices were likely closely guarded trade secrets of the artists who used them to achieve a degree of accuracy that was previously impossible, or at least very hard to achieve, with the unaided "free hand."

The artist David Hockney became very interested in this subject years ago and wrote a book about it in 2001. His basic theory was that the marked improvement in accuracy and realism is directly attributable to the secret use of the camera lucida (as well as an earlier device called the camera obscura).

As he pointed out, before that period you would never see a painting of a lute in perspective that didn't look distorted and wrong. While you could use the "rules of perspective" to draw simple rectilinear shapes realistically, the more complex geometry of a lute was beyond ordinary human ability to depict realistically in space. This theory is known as the Hockney–Falco thesis.

Ever since I learned about this in college in the early 2000s, I have mentally applied an asterisk to the work of certain painters. For example, as much as I respect and admire Ingres and Caravaggio, the awe I felt for their skills was tempered by the realization that they benefited from this kind of mechanical aid.

And sure, much of art lies in the concept, the composition and framing, the colors, the brushwork, and so on. But that stunning, lifelike realism is what impressed me most, and that part was at least partially shattered by this revelation. It also made me respect Michelangelo's sculptural realism even more (as well as his studies, which are clearly sketches made from life).

Anyway, the reason I bring this up now is that I think we are on the verge of the same sort of thing happening in mathematical research with the advent of models like GPT-5 Pro.

I have already used it to do what I suspect is genuinely novel and interesting research (as I detailed in recent threads), and just today we got an update from Sebastien Bubeck at OpenAI showing that the model was able to prove an interesting result in contemporary mathematics using a novel proof, in one shot no less.

So this new era is suddenly upon us. Just last week we saw a result from Chinese computer scientists smashing a record for optimal sorting that had stood for 45 years.

I remarked at the time that I wondered whether AI had been used in some way to generate that result.

See also the recent paper in the quoted tweet, which has a similar flavor in that it is surprising but also elementary. Those strike me as the hallmarks of results that may have benefited from AI in some way.

Now, I don't want to accuse these authors of anything. For all I know, they did everything by hand, just as painters did in the 13th century.

And even if they did use AI to help them, we haven't yet settled on norms for how to deal with that: what disclosures are warranted, and how credit should be divided and weighed. The entire concept of authorship needs to be reconsidered today.

In the recent thread where I investigated, alongside GPT-5 Pro, the use of Lie theory in deep learning, I came up with the prompts, although I never in a million years could have created the theory and code that the model developed in response to those prompts. Do I get credit for the result if it turns out to revolutionize the field?

What about my follow-up experiment, where I used the original prompts I wrote, along with a "meta prompt," to get GPT-5 Pro to come up with 10 more pairs of prompts loosely modeled on my experiment but involving completely different branches of math that went off in totally different directions?

...
